Functional. But built for machines to use.

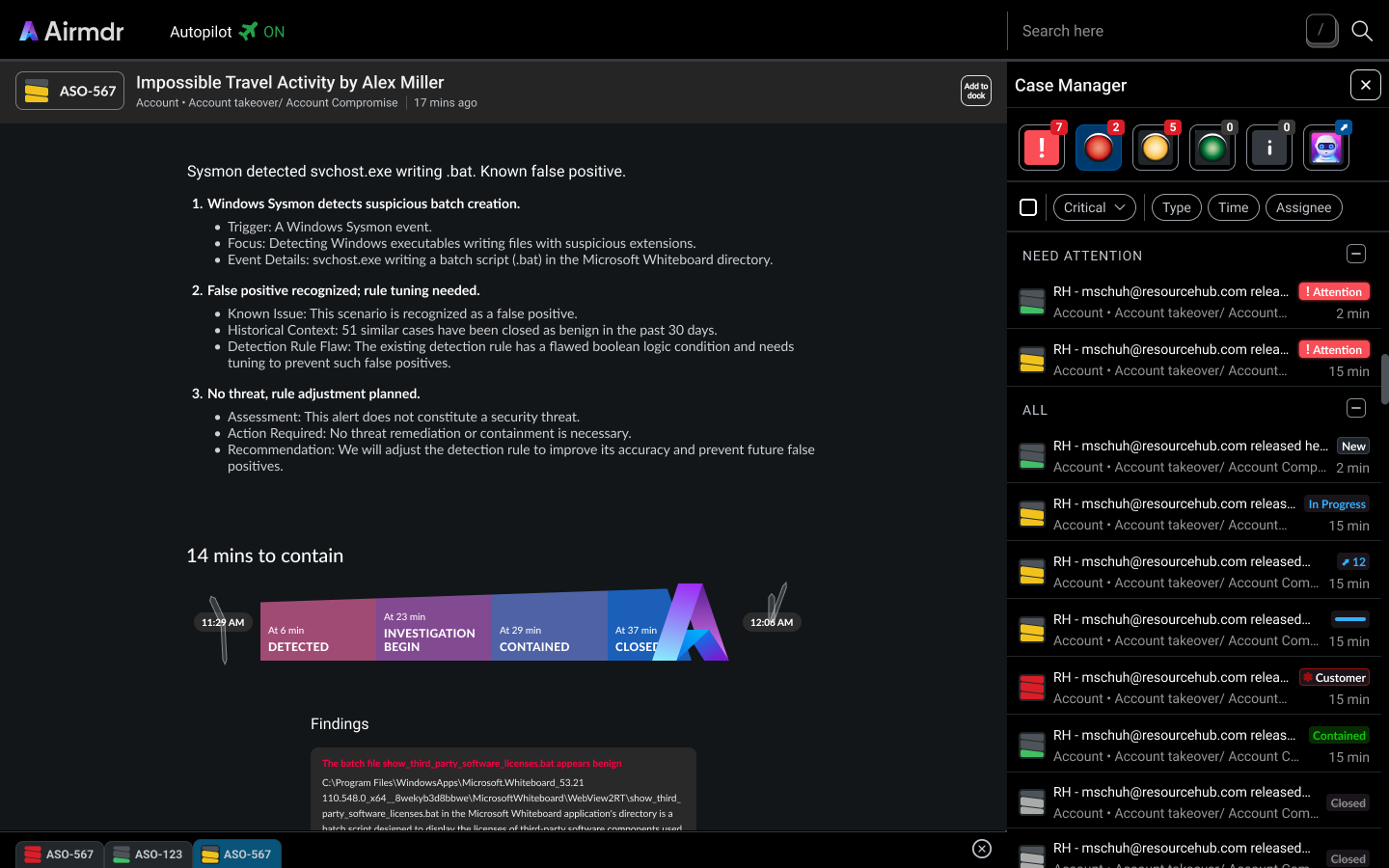

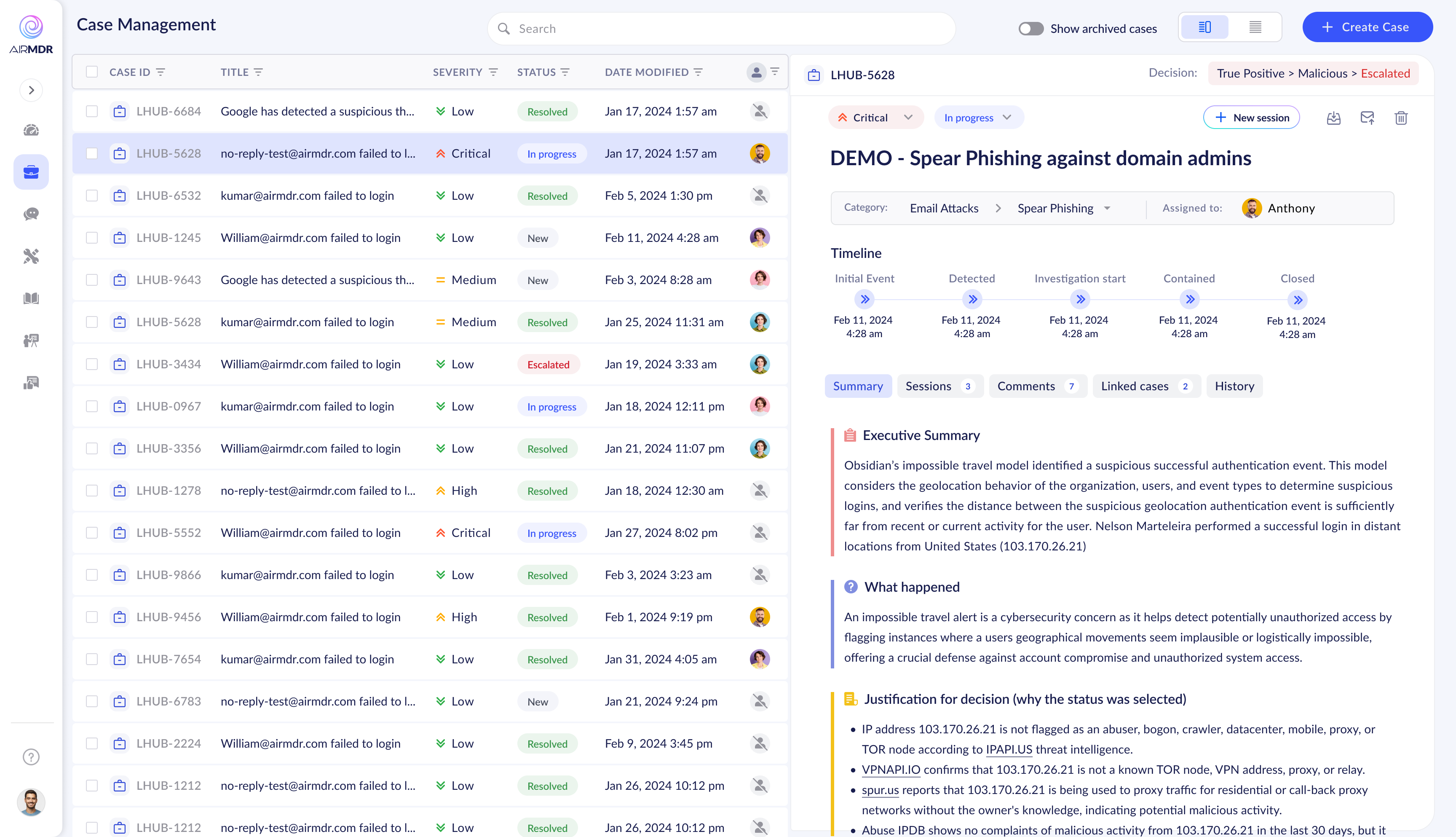

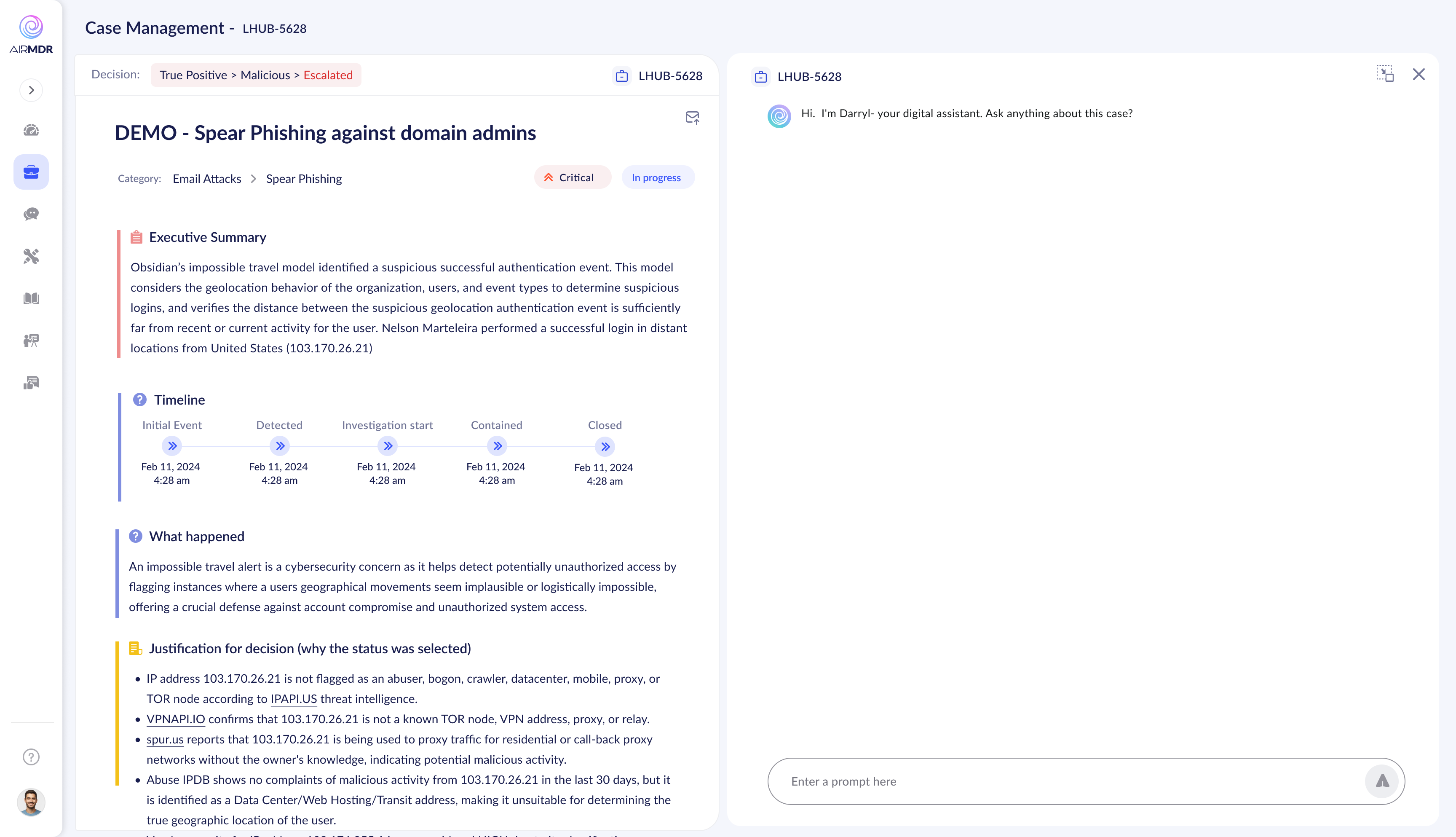

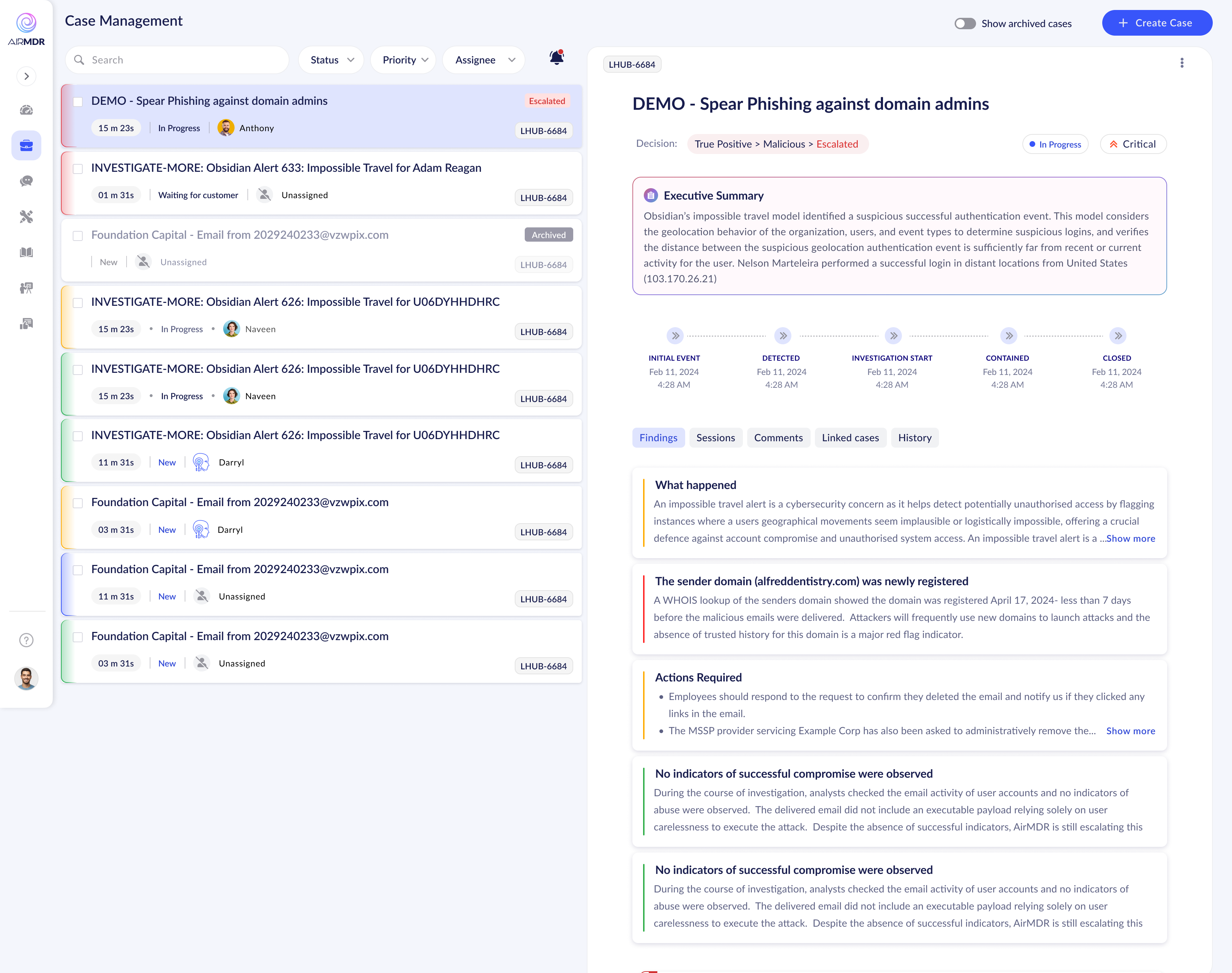

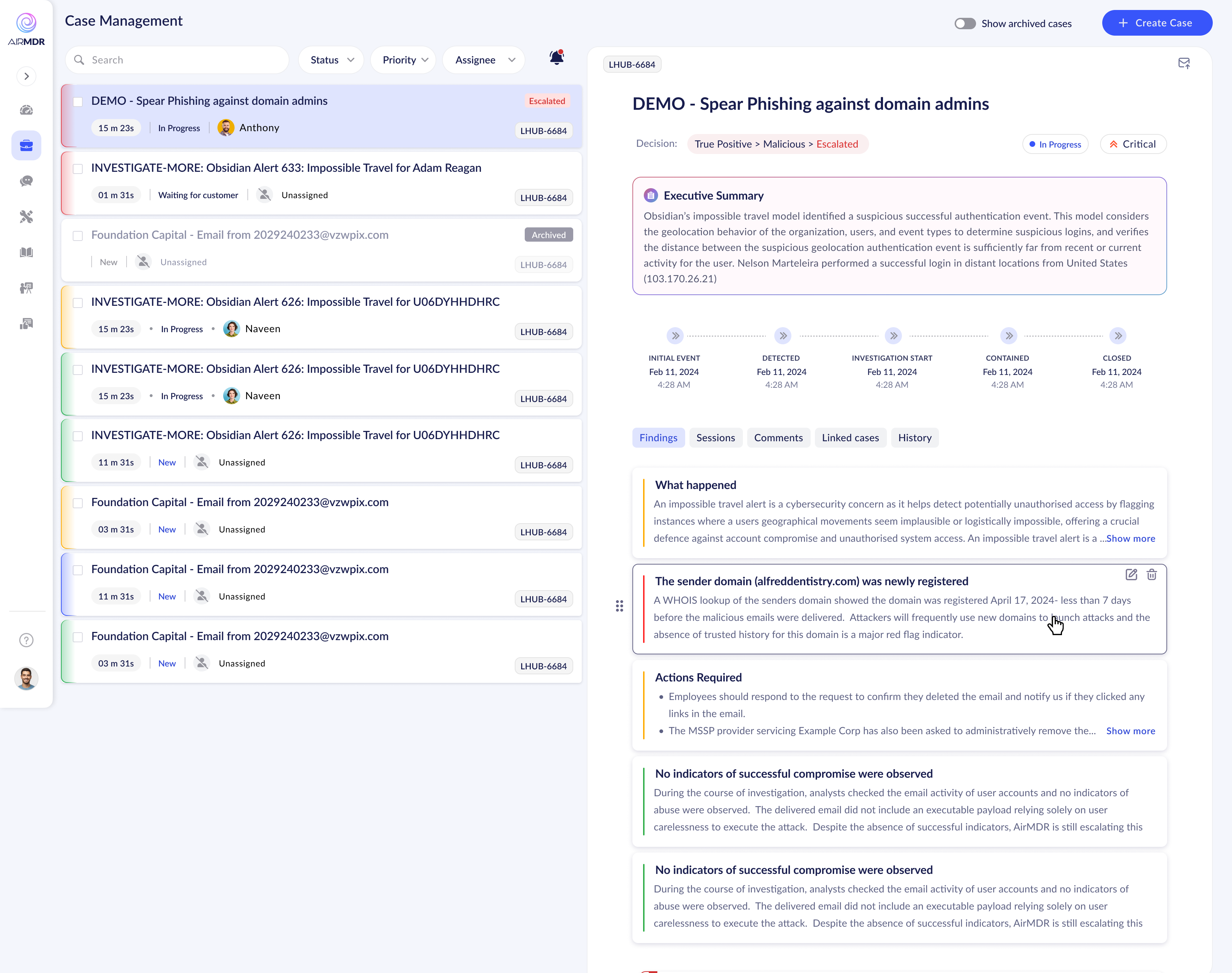

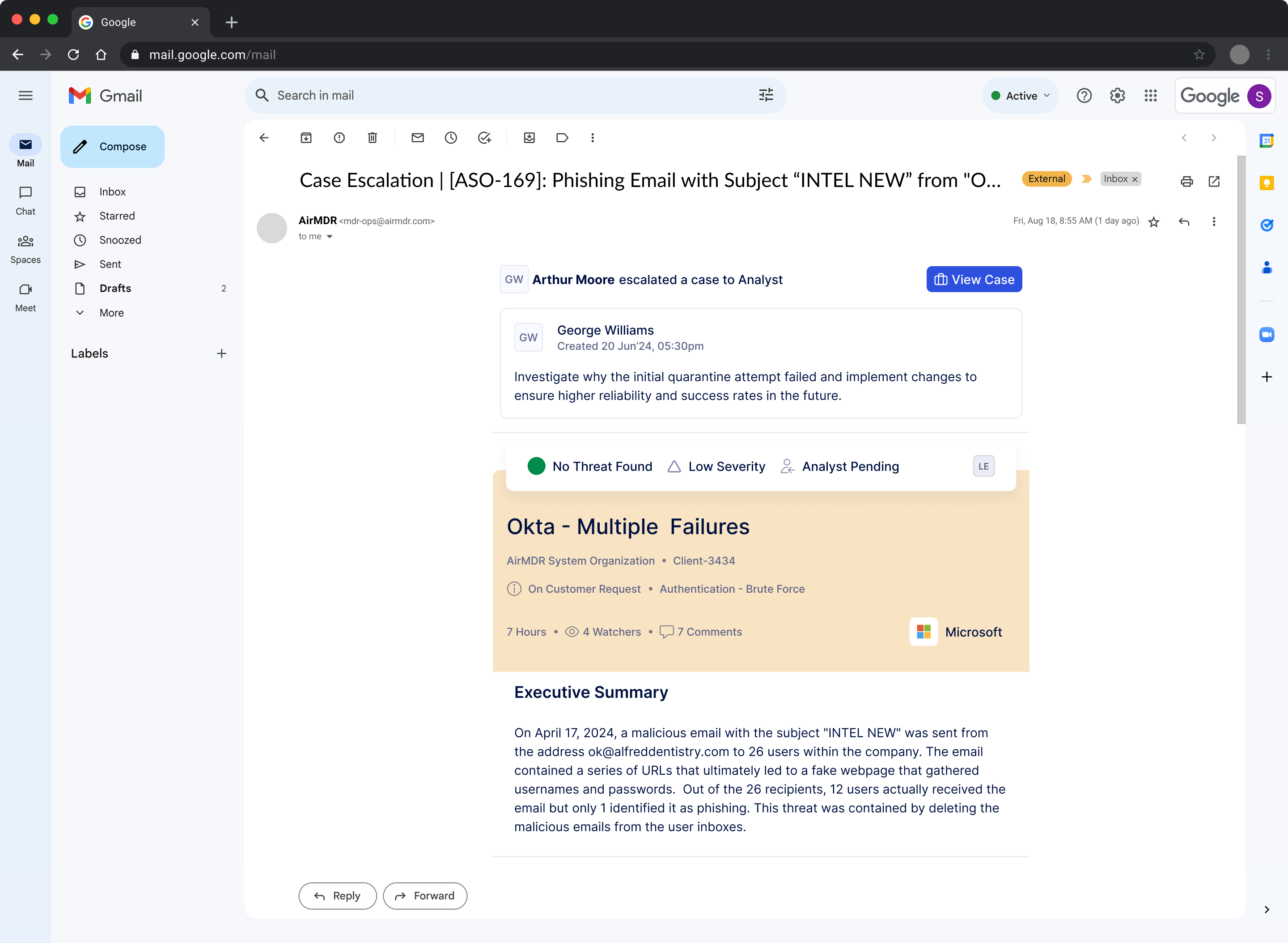

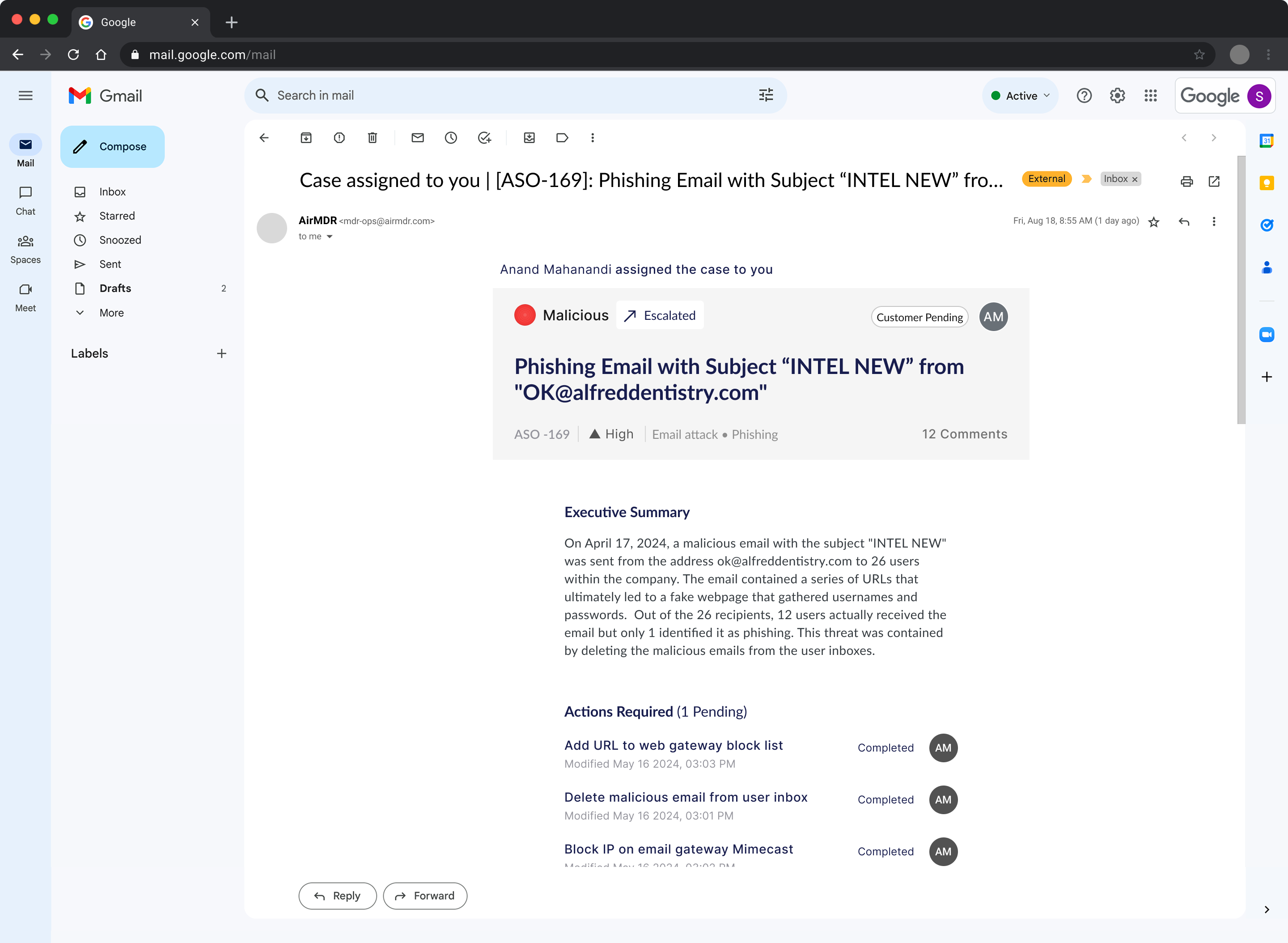

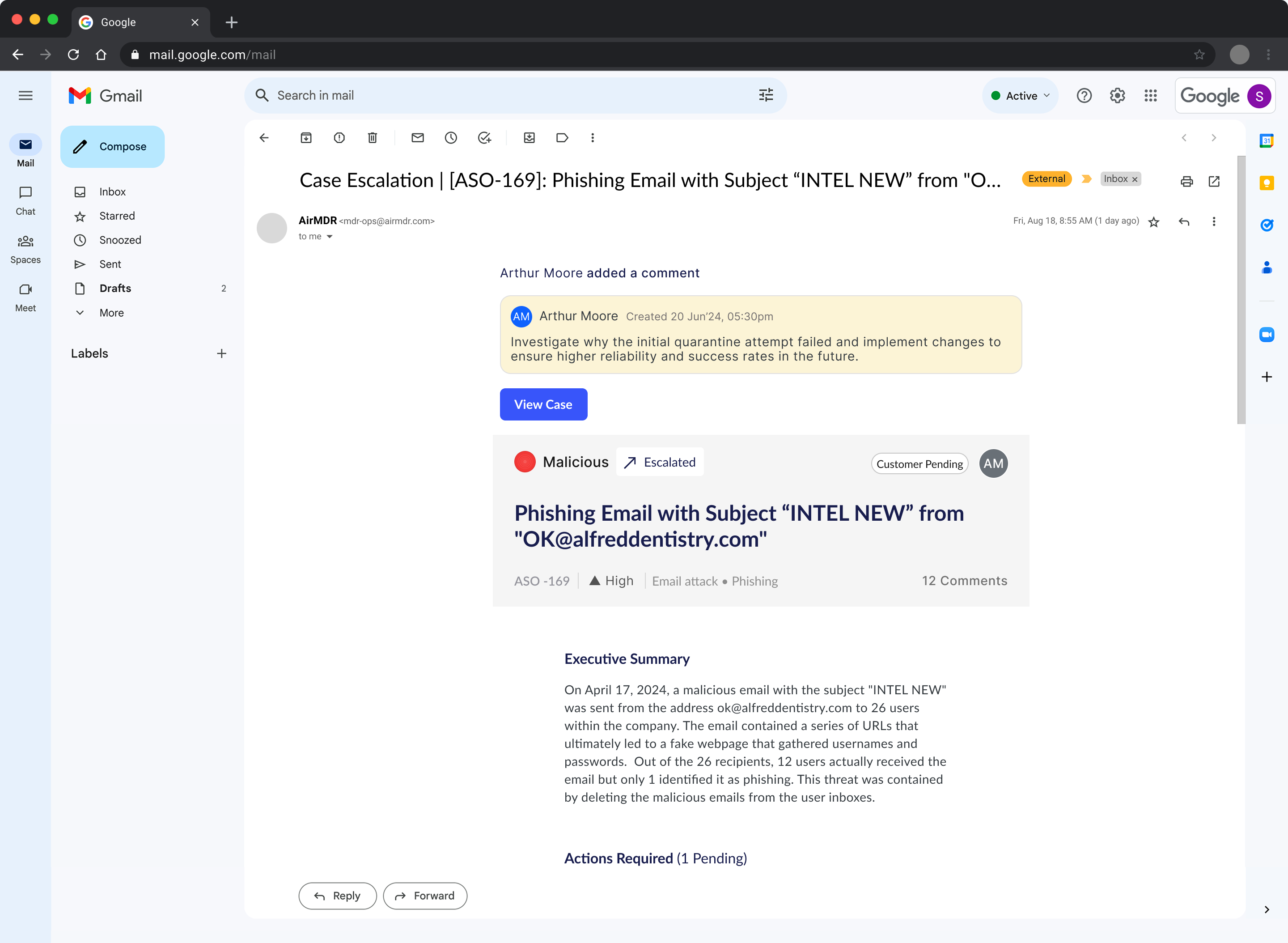

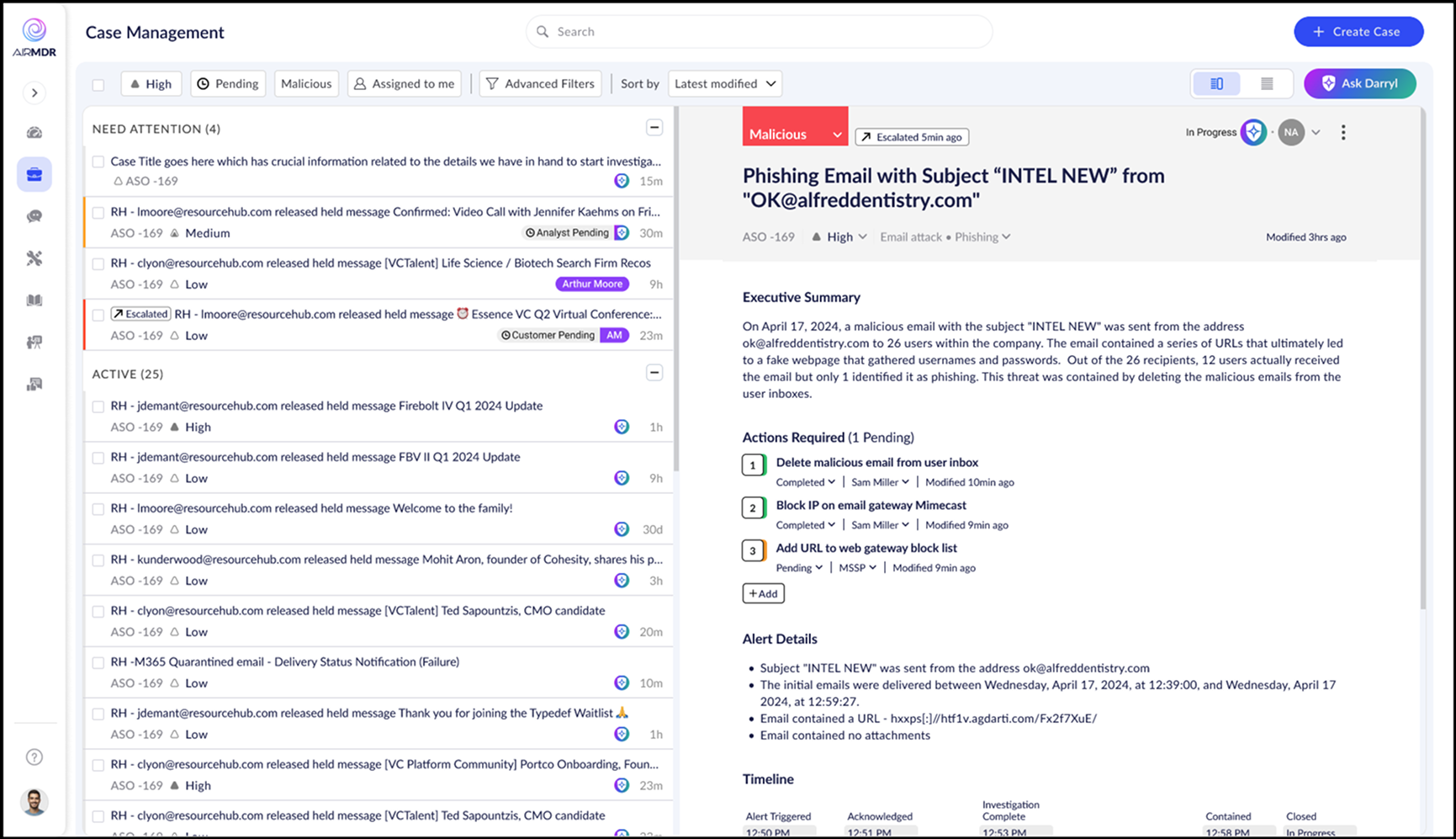

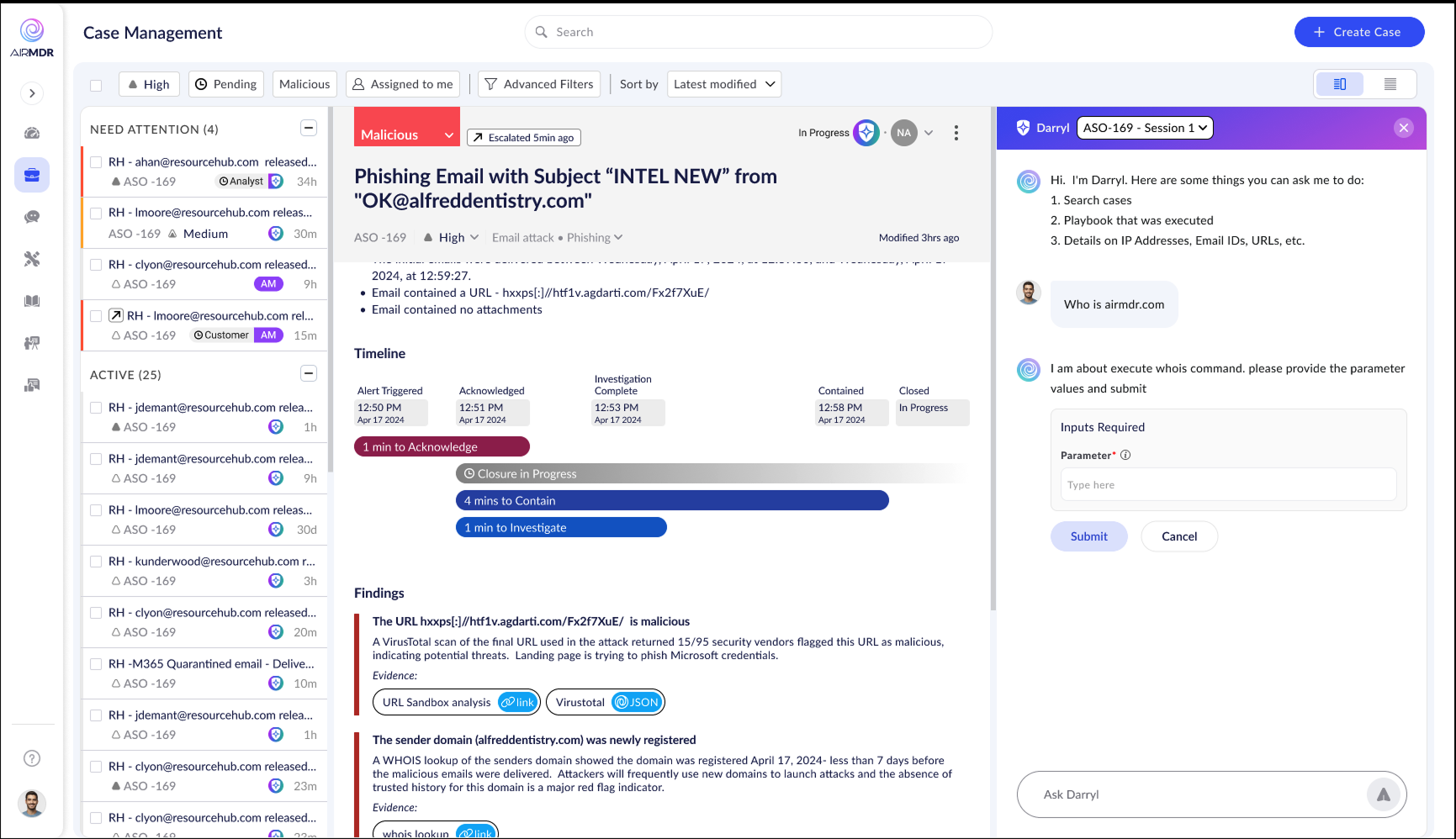

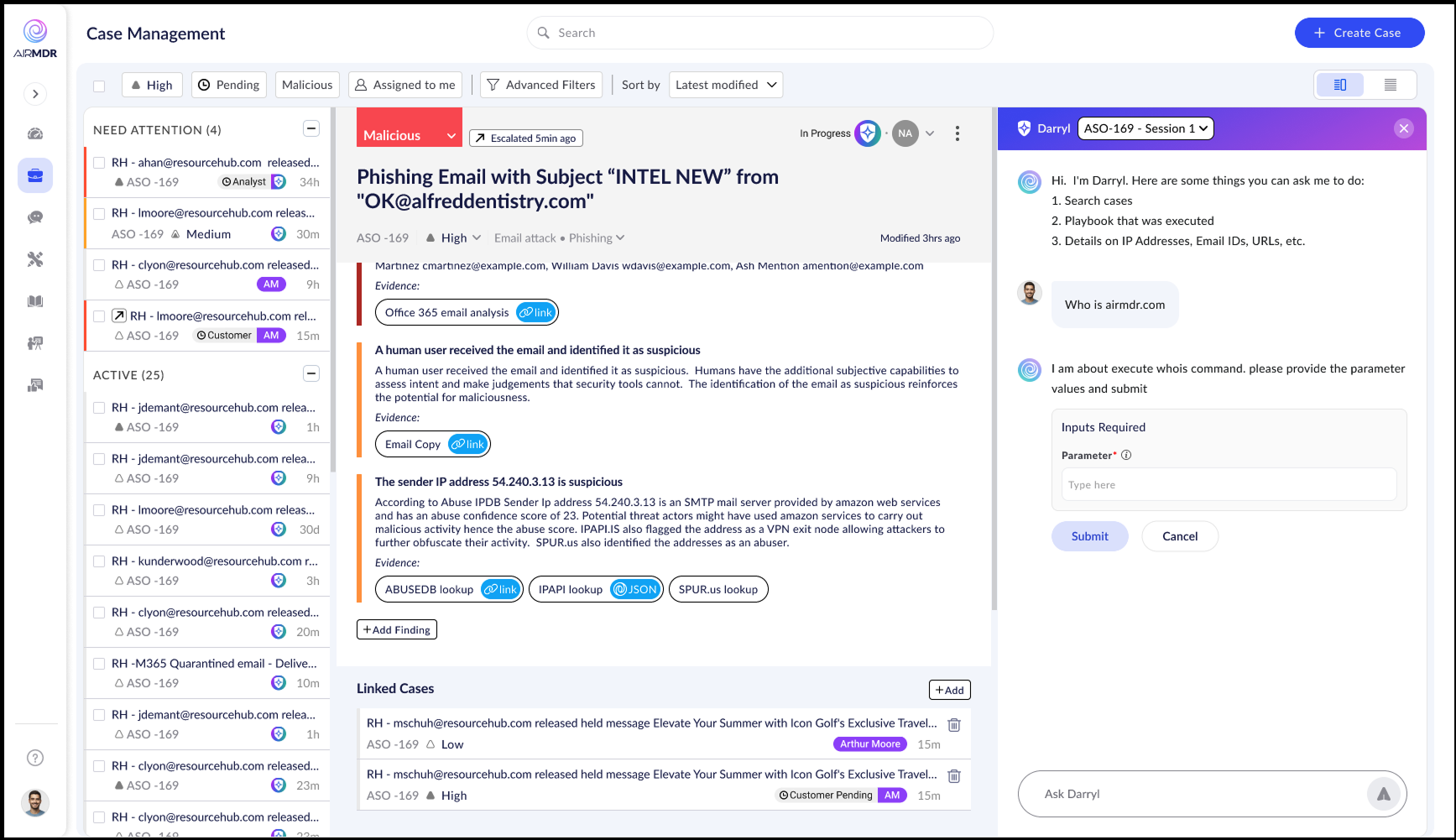

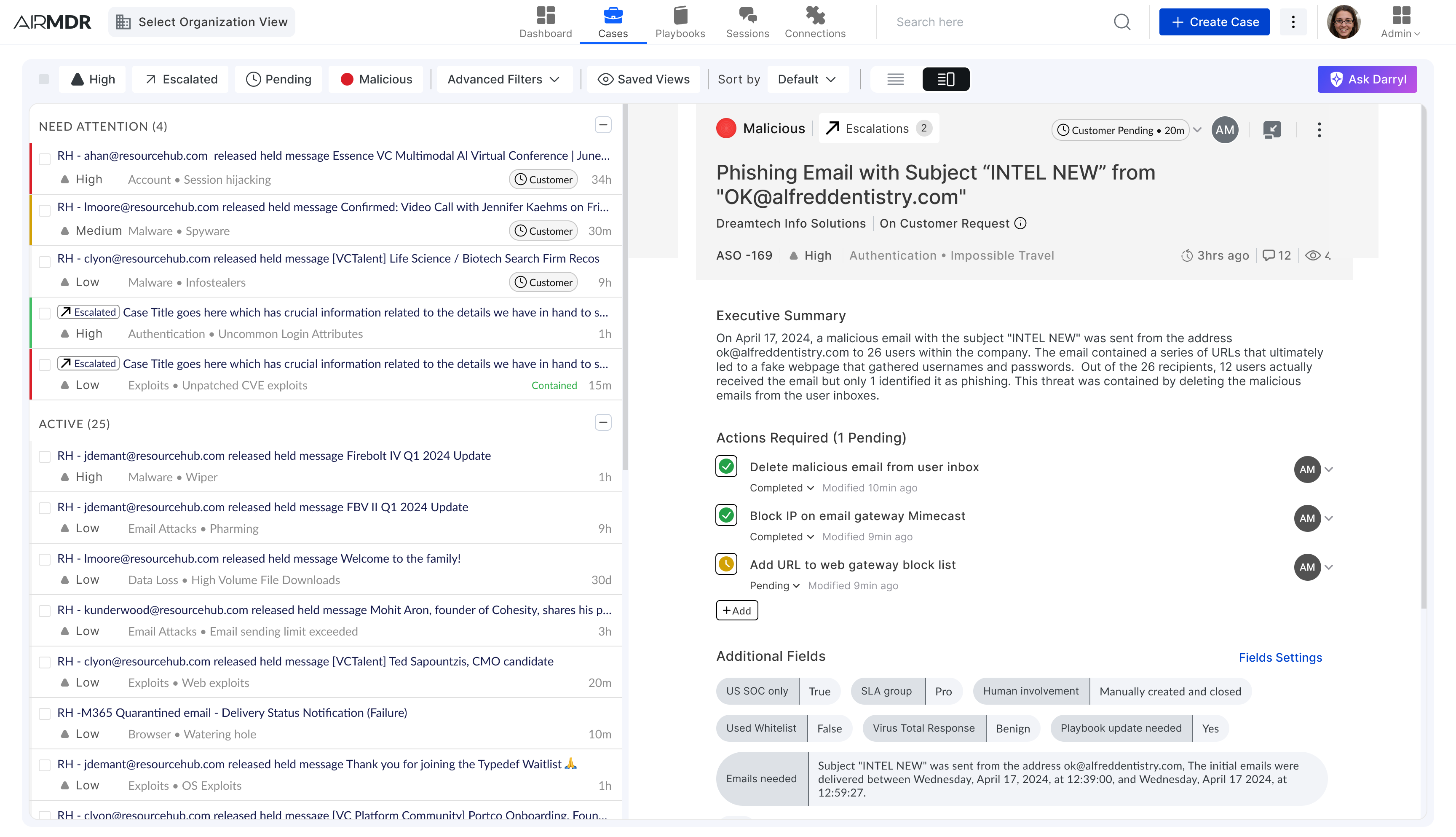

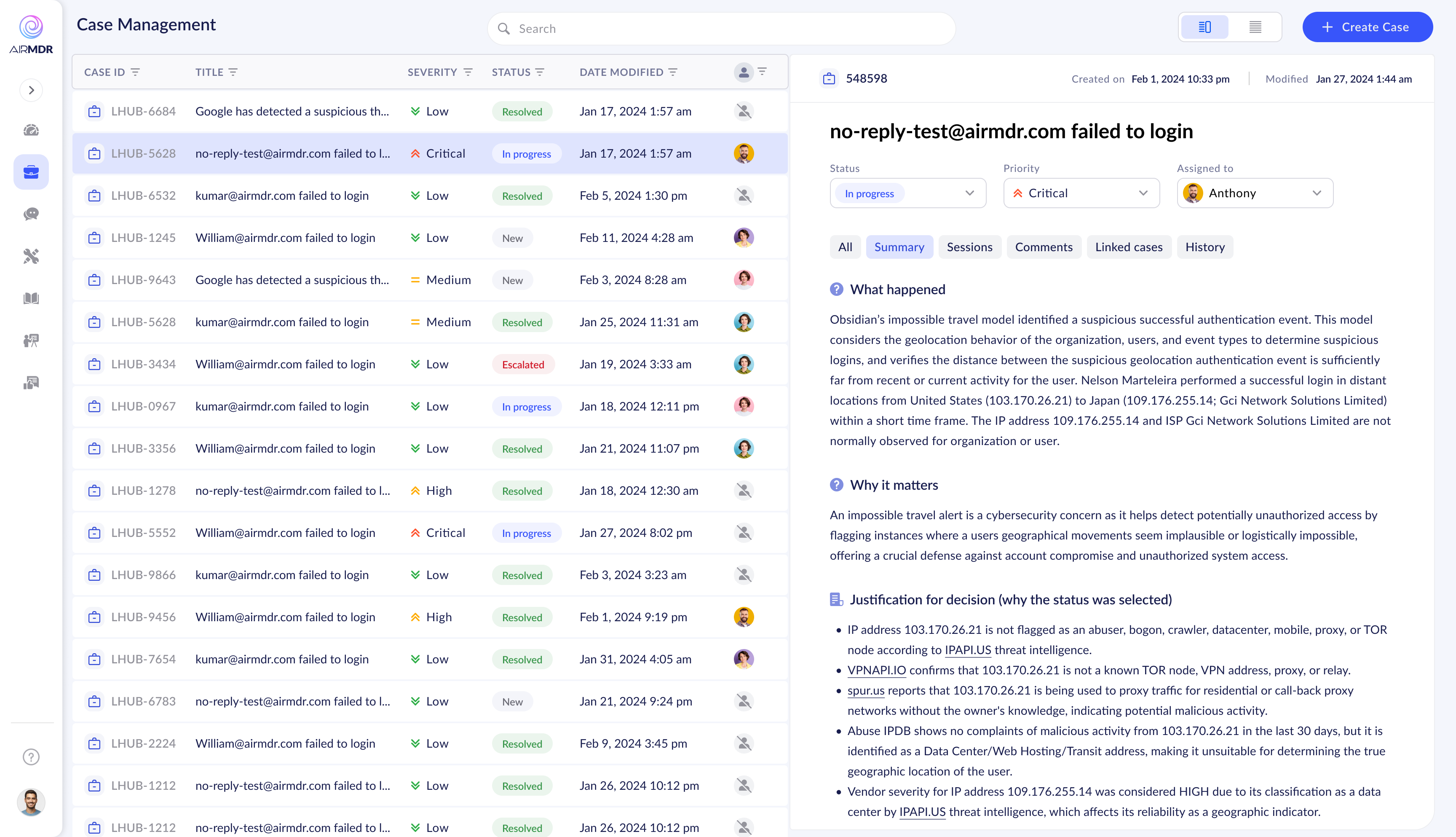

When I joined, the Case Manager worked — technically. AI-generated cases appeared, alerts were sorted, analysts got through the queue. But the experience of actually working through a case was laborious. Context-switching was constant. There was no clear mental model guiding the analyst from alert to resolution.

The data was all there. The problem was that analysts had to work too hard to extract meaning from it.

"The data was all there. The problem was that analysts had to work too hard to extract meaning from it — in an environment where every second of cognitive load costs real security outcomes."

Four core problems

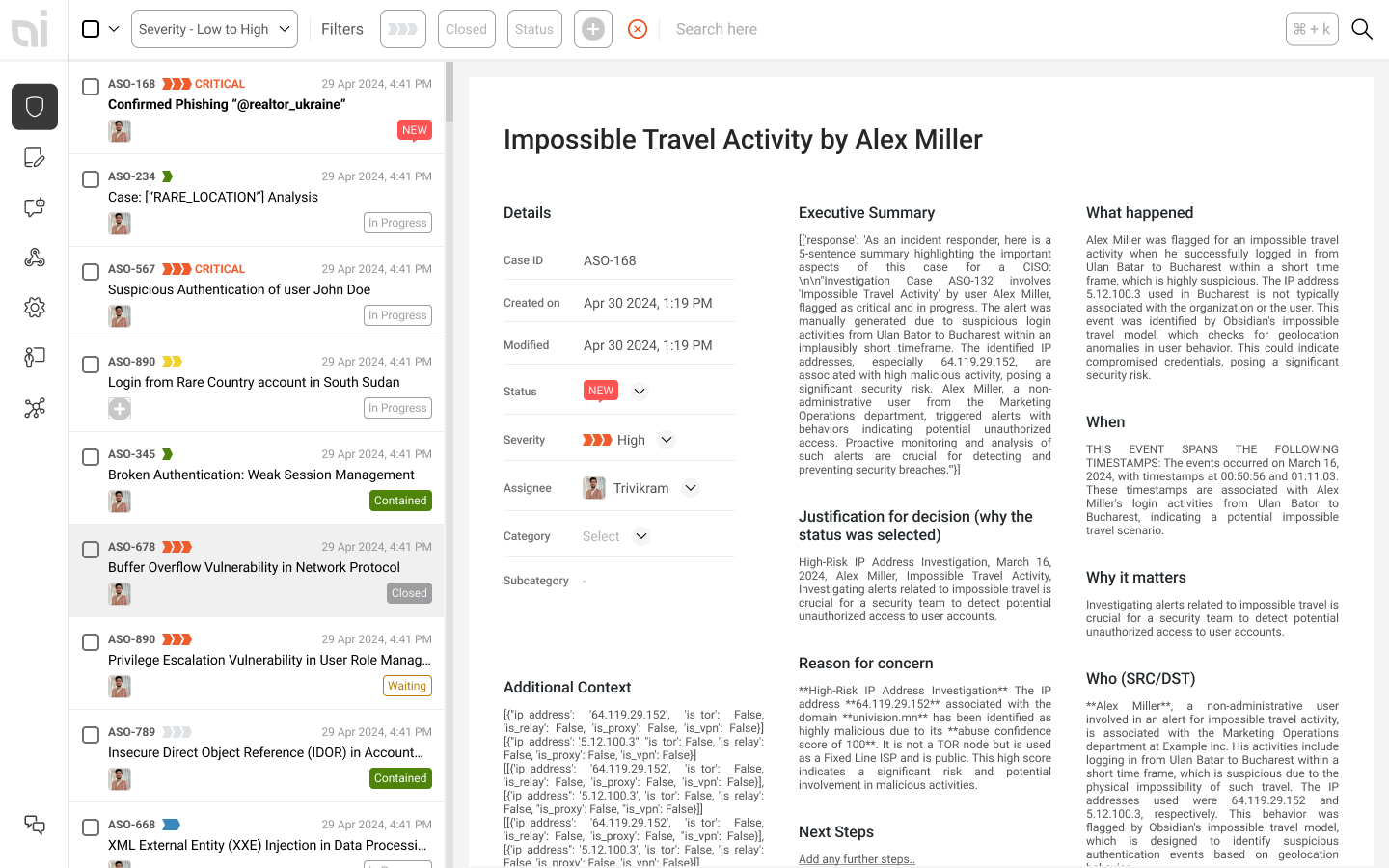

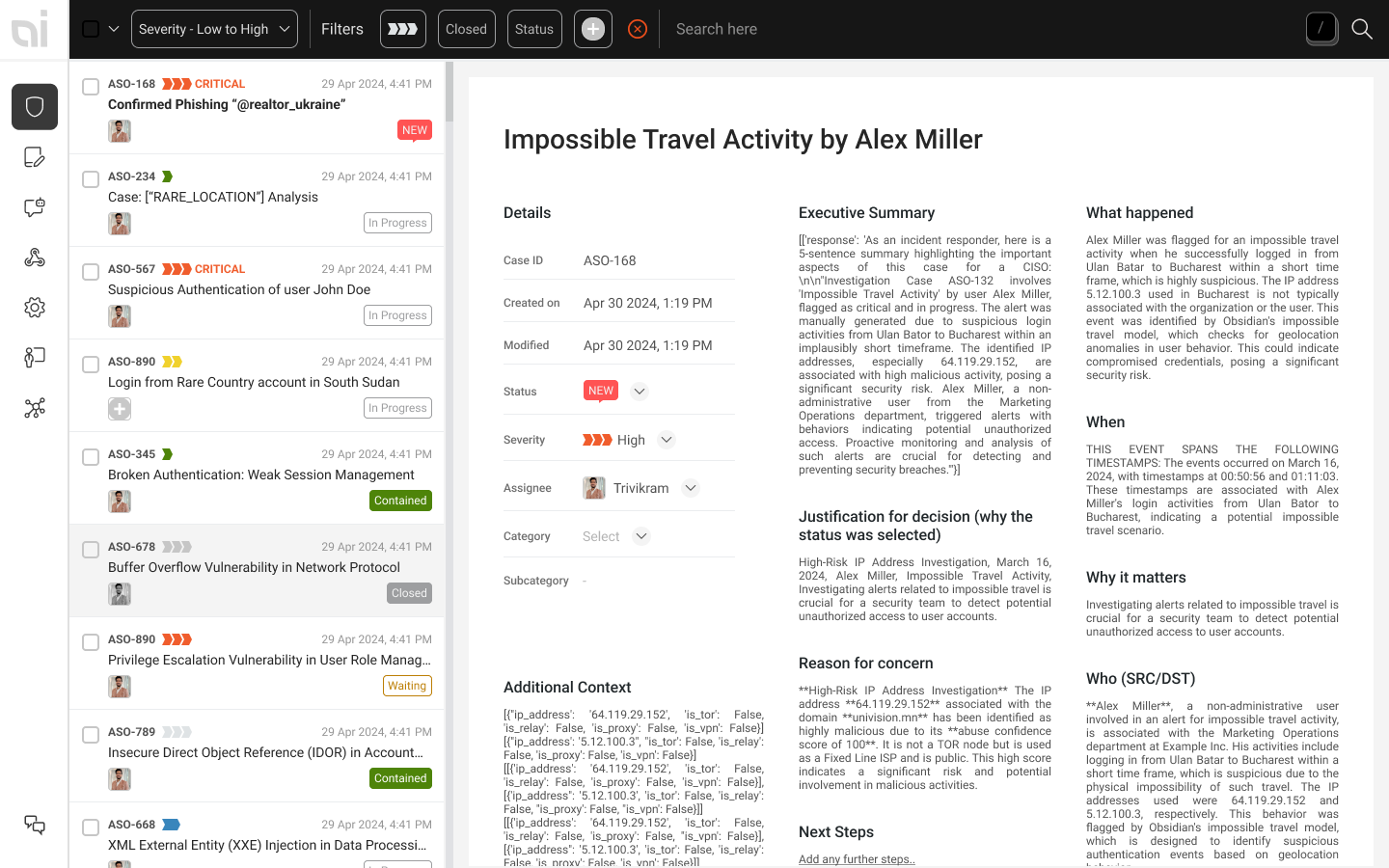

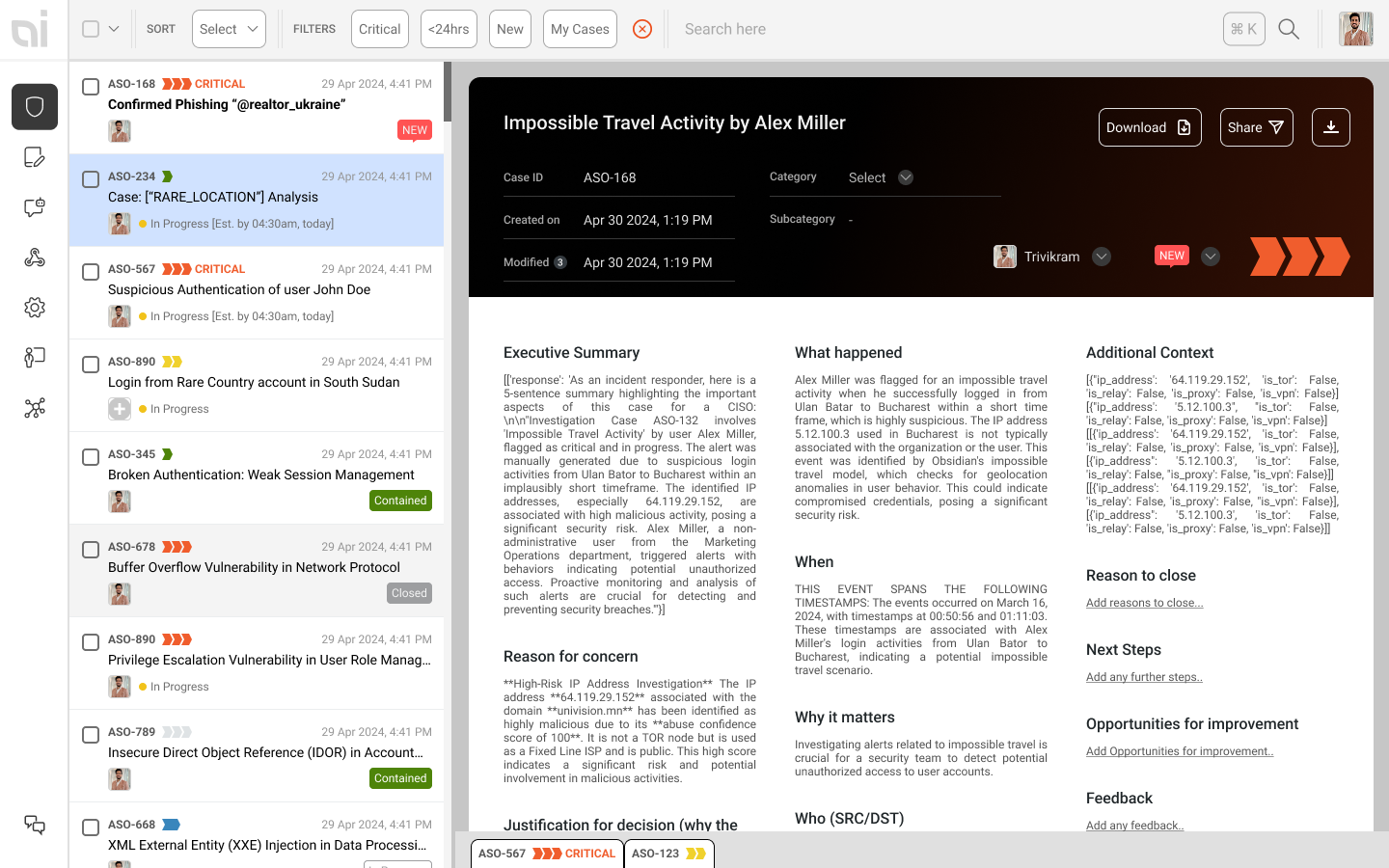

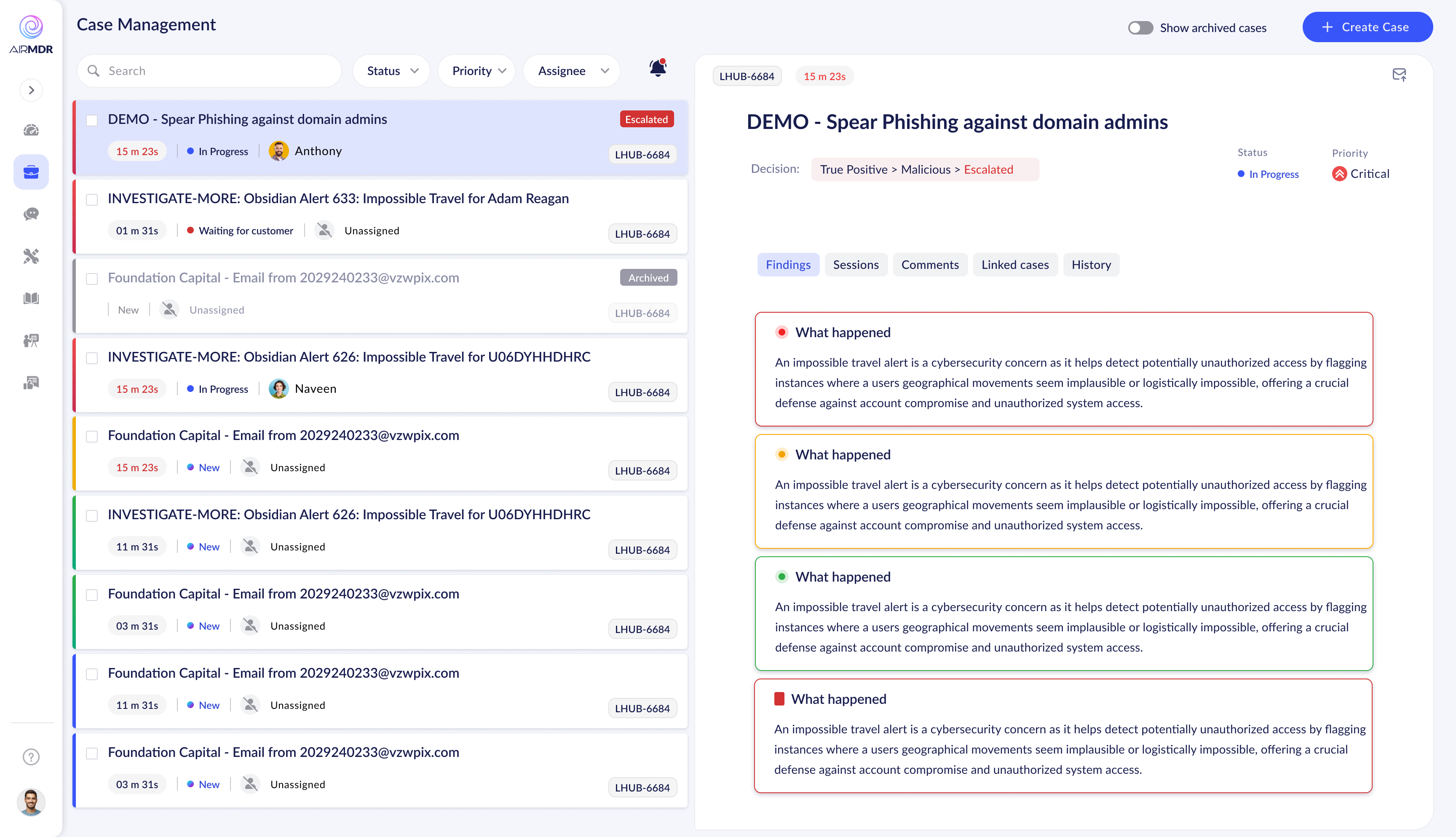

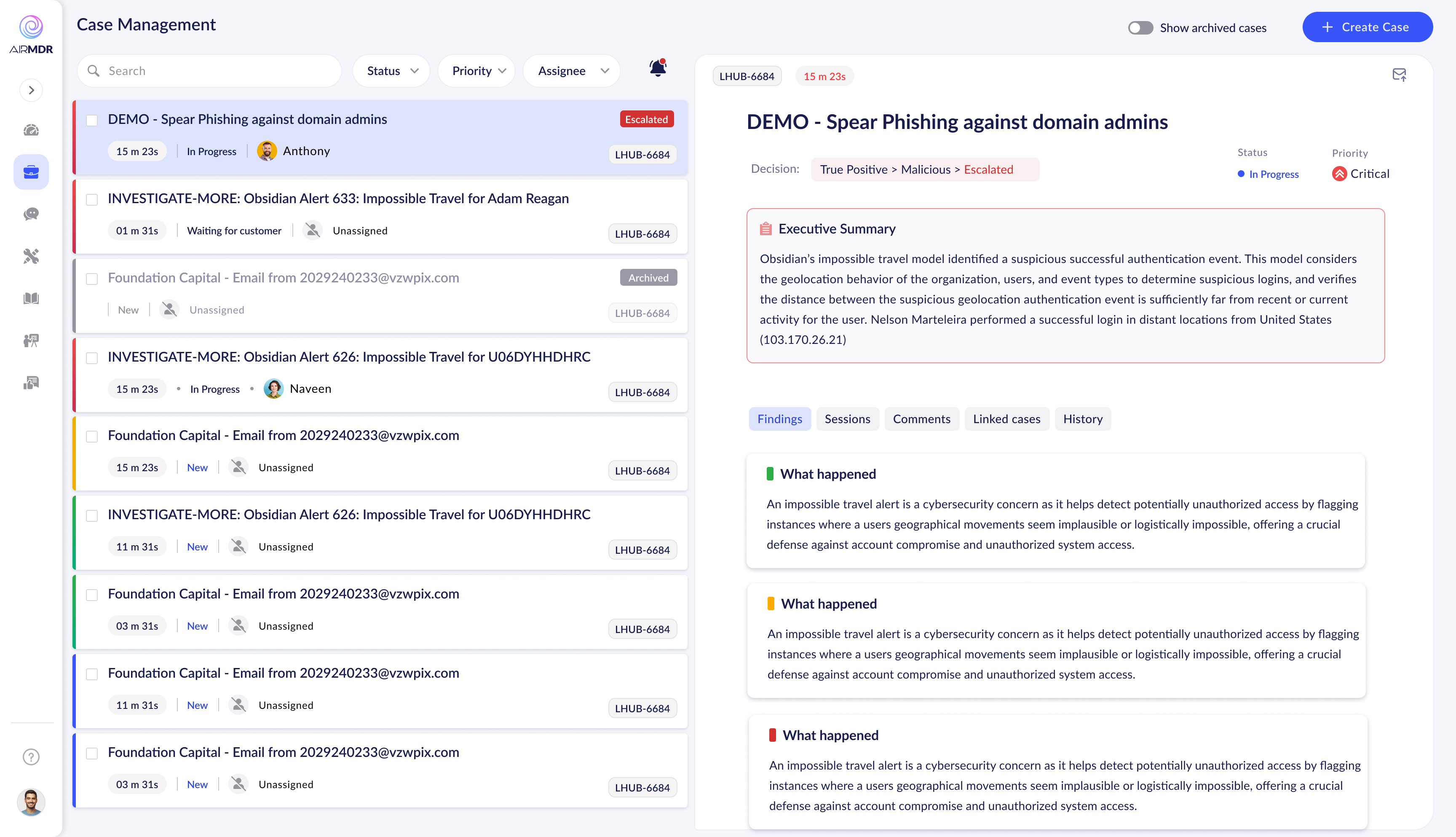

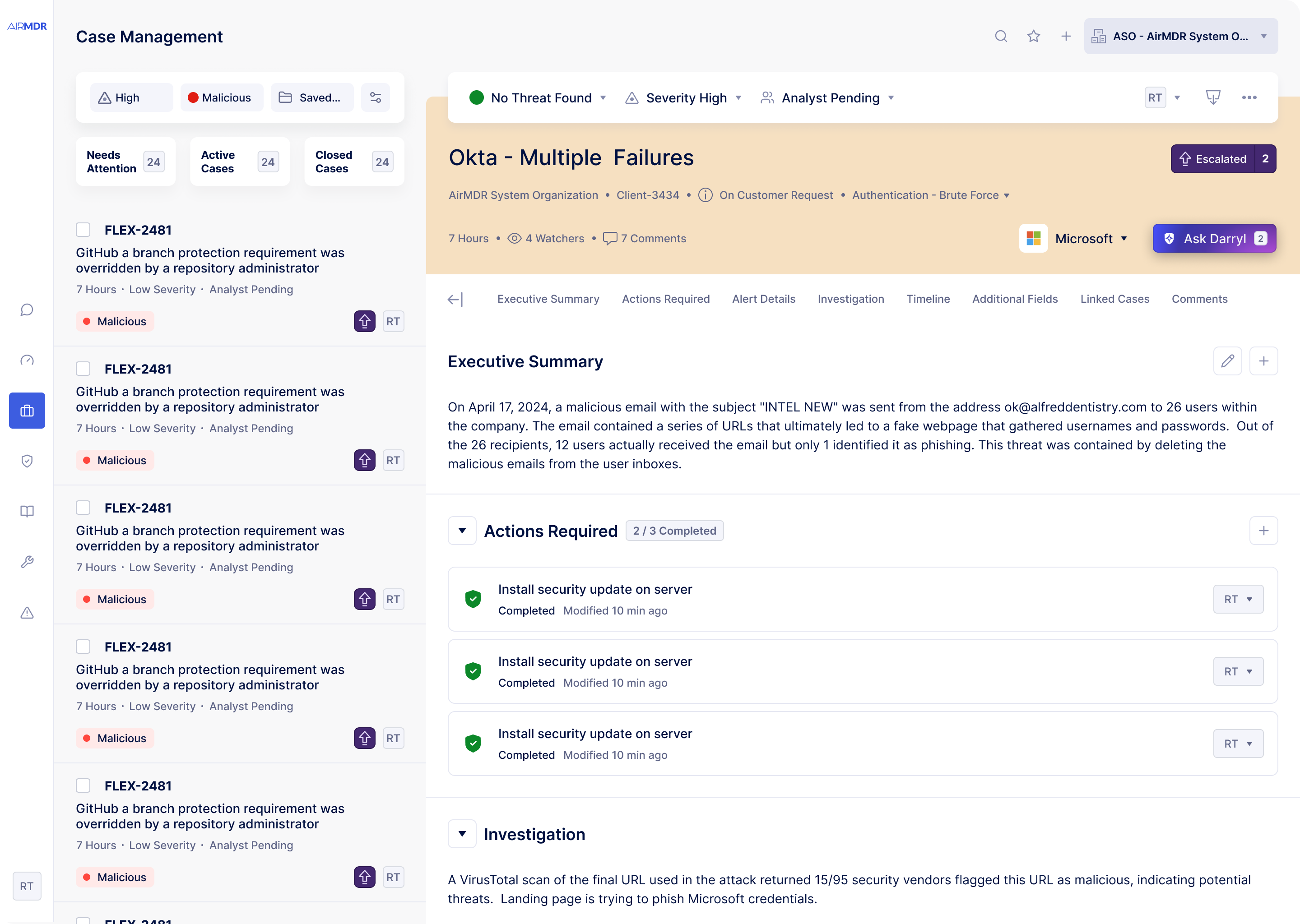

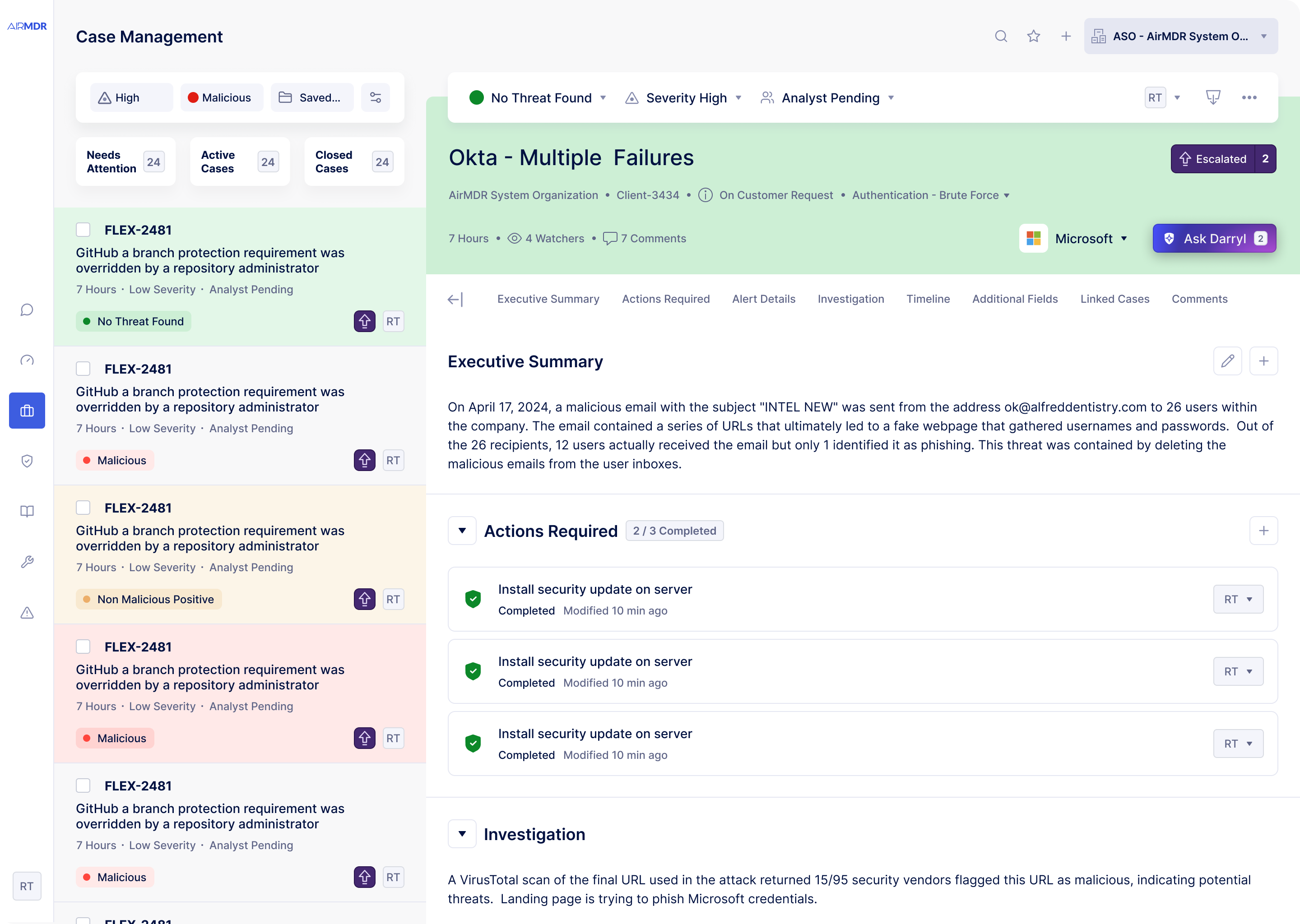

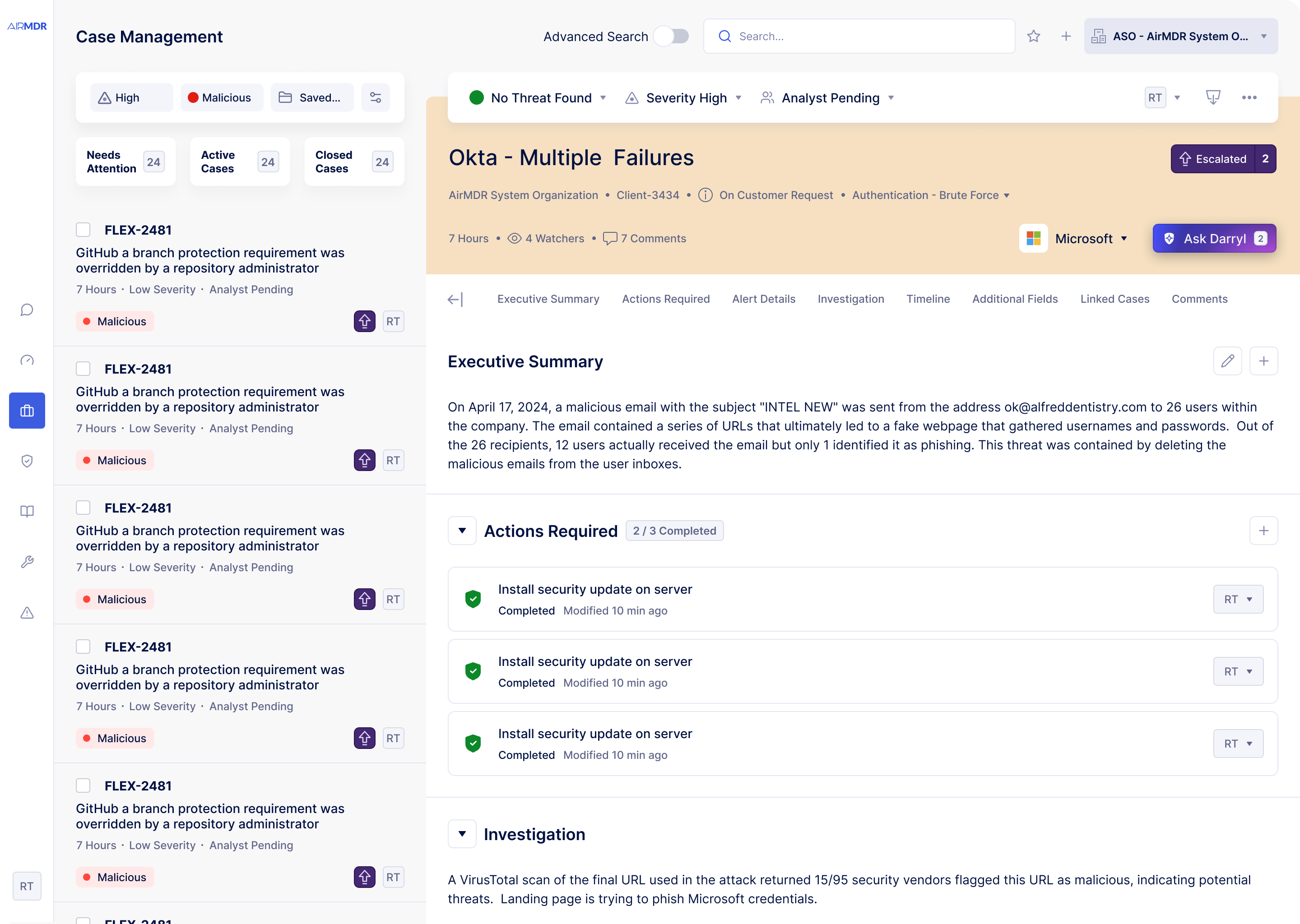

No information hierarchy

Critical escalations sat next to routine resolved cases with no visual differentiation. Analysts couldn't triage at a glance — everything demanded equal attention.

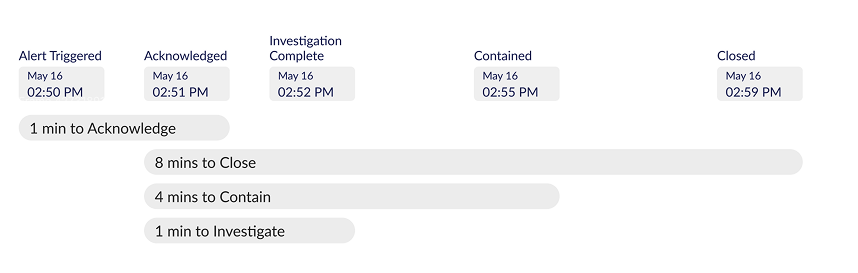

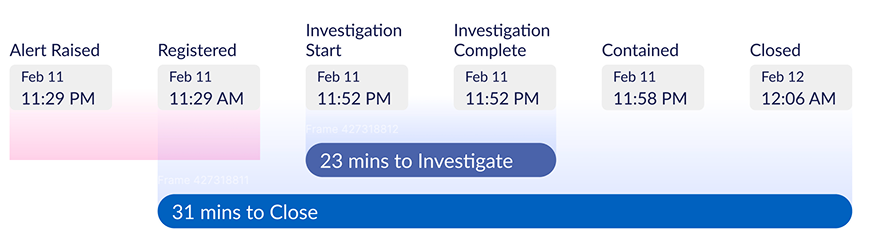

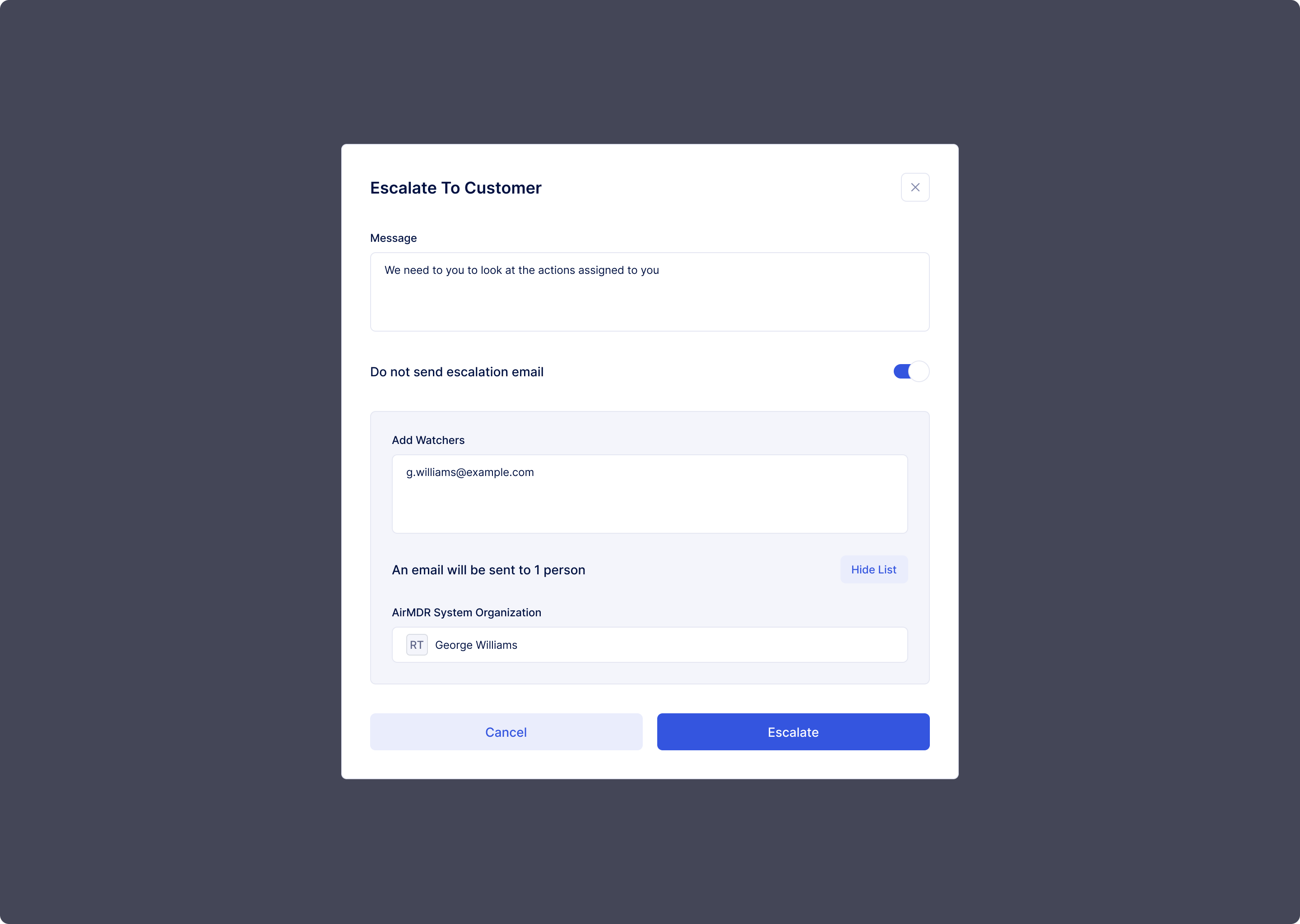

Broken case flow mental model

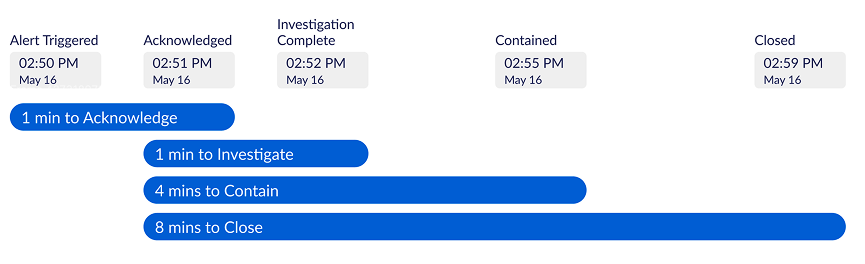

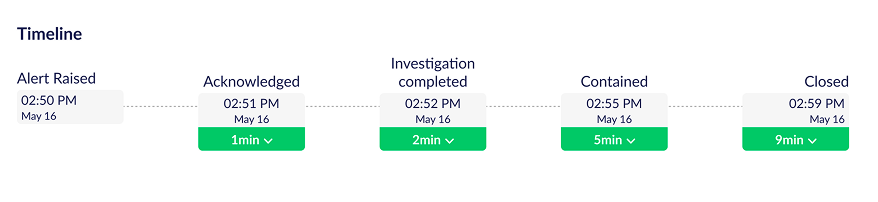

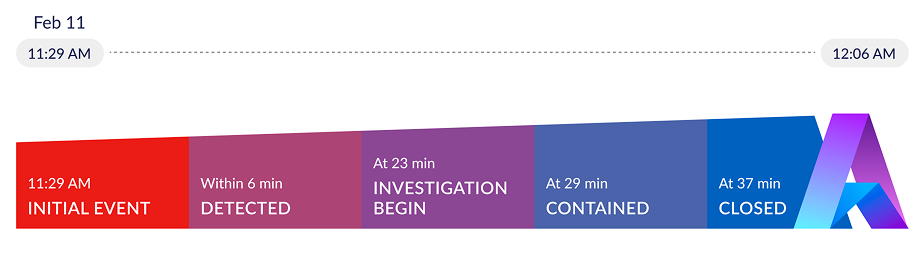

The path from "new alert" to "case closed" wasn't reflected in the interface. Analysts built their own workflows, leading to inconsistency and slower resolution times across the team.

Obsolete design system blocking development

A rigid, outdated component library required custom work for every new feature. Engineers were spending time on visual plumbing instead of product capabilities.

No familiarity anchors

The tool used patterns analysts had never seen in their daily work. In a high-stakes, time-pressured environment, that learning overhead directly cost security outcomes.

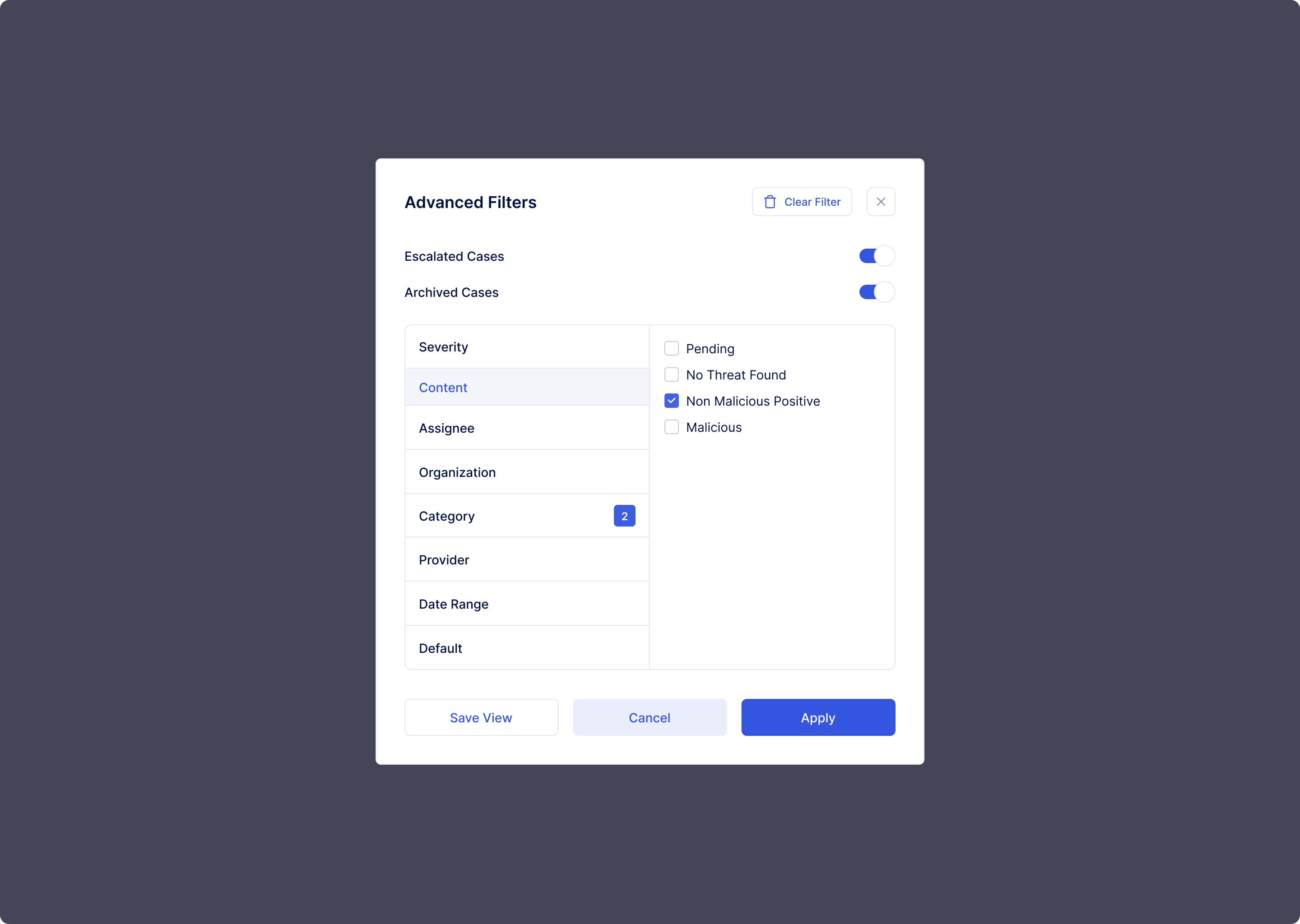

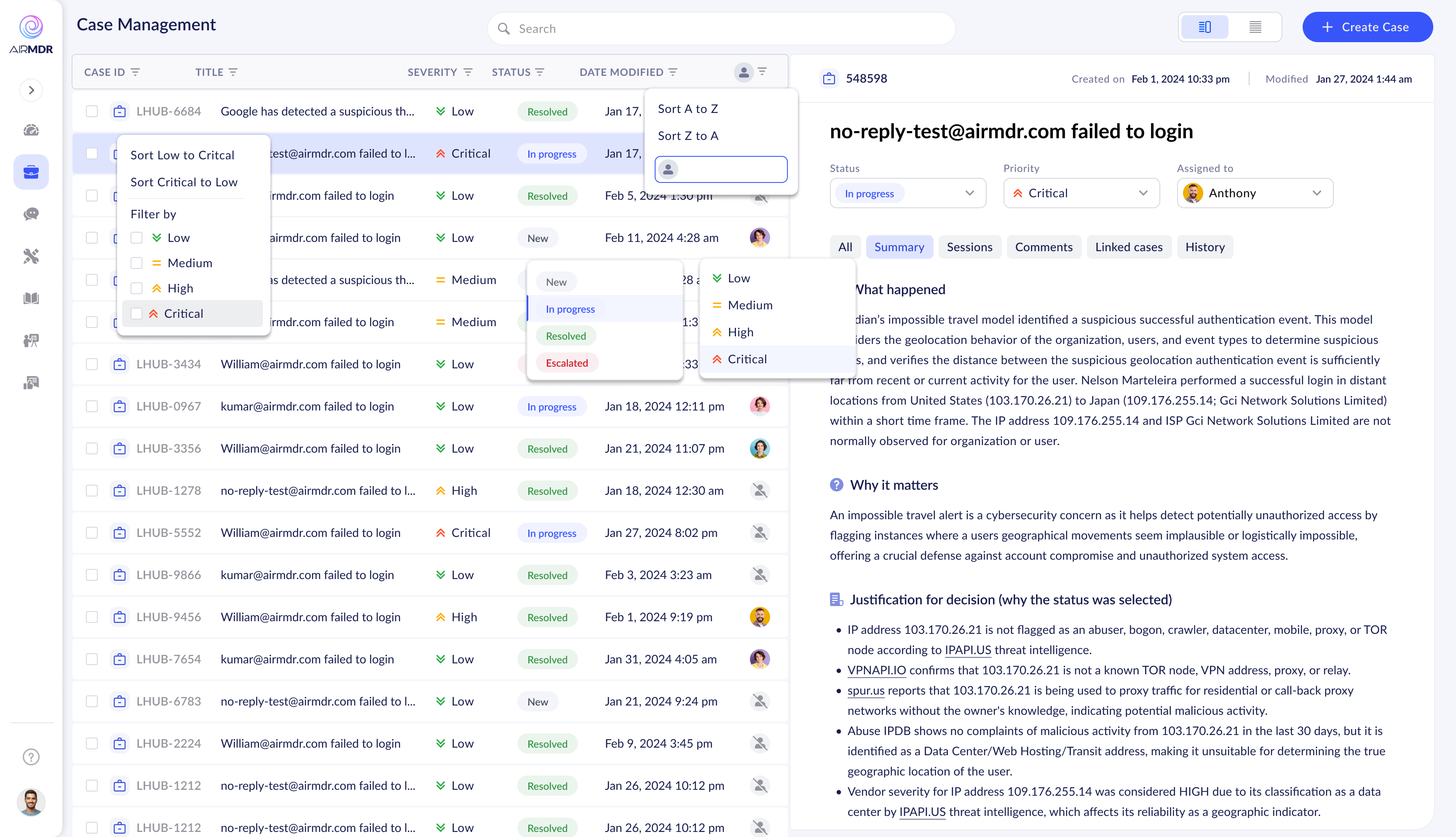

Cascading filter dropdowns — functional but unexplained affordances


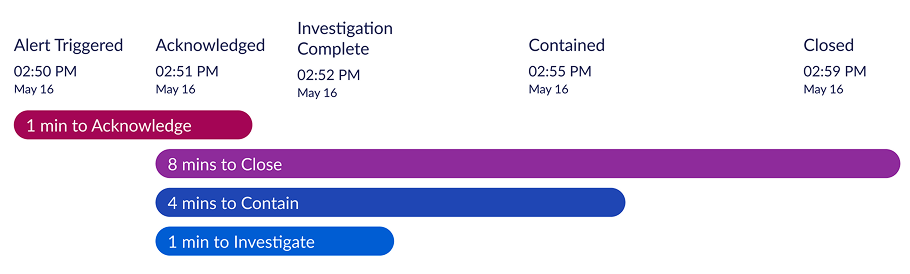

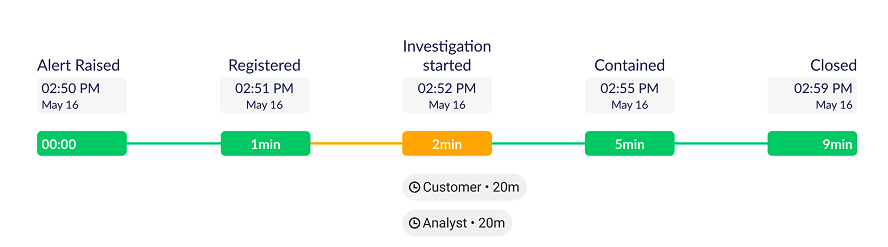

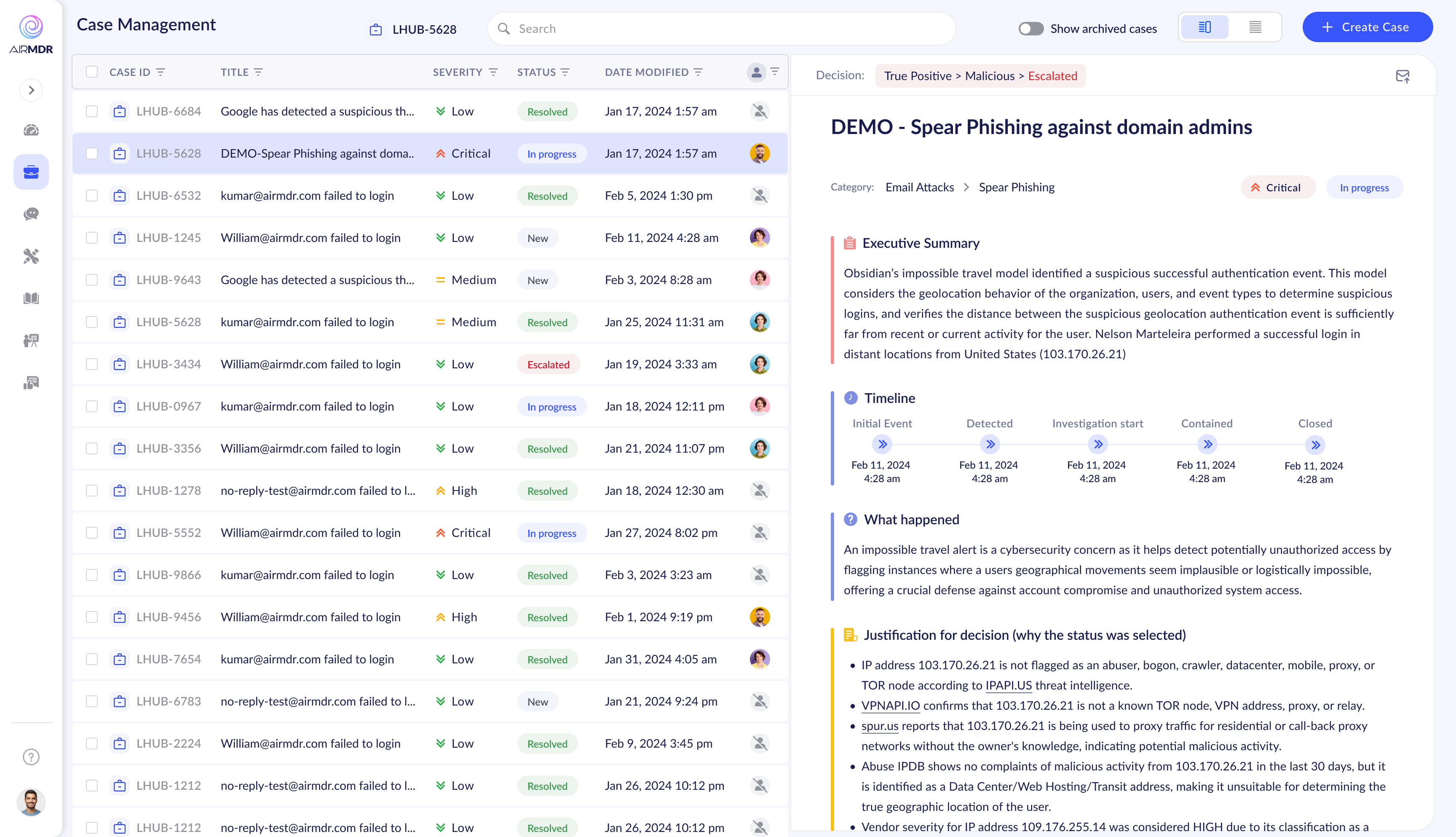

Early timeline in case detail — data present, hierarchy absent
